Today, a prediction about the timing of Android victory. But – more importantly – a discussion of the uses and perils of statistical extrapolation.

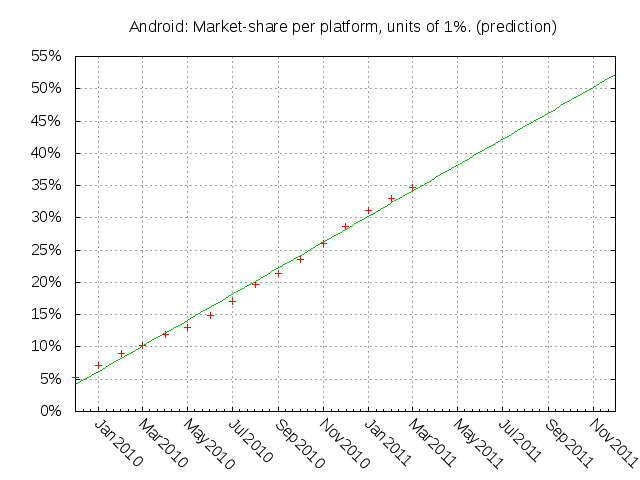

I’ve used gnuplot to do a linear regression against comScore’s history of Android market share and project it into the future. Here it is:

Looks very neat, doesn’t it? Shows Android crossing 50% U.S. market share right about the end of October. Happy news, if true.
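For readers who want to see the mechanics, here is a minimal sketch of the same kind of calculation in Python rather than gnuplot. The data points are invented placeholders, not comScore's figures, and the helper names `linear_fit` and `crossing_time` are hypothetical; substitute the real series to reproduce the plot.

```python
# Illustrative only: these (month, share %) pairs are invented placeholders,
# NOT comScore's figures.
months = [0, 1, 2, 3, 4, 5]
share = [20.0, 22.1, 23.9, 26.0, 28.1, 29.9]


def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def crossing_time(a, b, level):
    """x at which the fitted line reaches `level` (requires a != 0)."""
    return (level - b) / a


a, b = linear_fit(months, share)
residuals = [y - (a * x + b) for x, y in zip(months, share)]
t50 = crossing_time(a, b, 50.0)

print(f"slope = {a:.3f} points/month, intercept = {b:.3f}")
print(f"max |residual| = {max(abs(r) for r in residuals):.3f}")
print(f"fitted line reaches 50% at month {t50:.1f}")
```

The crossing date is pure arithmetic on the fitted coefficients; nothing in the calculation checks whether a straight line is the right shape.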

But there are significant methodological issues about how far we should trust a graph like this. By exploring them, I hope to help my readers become a bit more informed about how to apply rational skepticism to statistical extrapolation. There’s an awful lot of lying with statistics going on out there, much of it on issues weightier than smartphone market share; it is good to learn how not to be fooled.

I will start by giving you the dead minimum you should accept for confidence in my statistical extrapolation – full access to my dataset and my analysis/visualization code. Deep suspicion would be justified if I did not. I could be hiding dishonest manipulation of the data, or I could simply have made mistakes. Without the ability to check my work, you can’t know – and if I denied you that ability, the safe bet would be that I have something to hide.

This may seem obvious, but a surprisingly large amount of science (especially politically-loaded science) is done under conditions of nondisclosure that could cover an awful lot of flimflam. Skepticism about such ‘science’ is not merely justified, it’s required – and the higher the public-policy stakes are, the more uncompromising the demand for full disclosure has to be.

But don’t forget that I am relying on comScore’s primary datasets and code, which I can’t see. The absolute most you can deduce from what I show you is that my extrapolation is correct if comScore’s numbers are correct, and your confidence in that can only be as strong as the value of the business comScore would lose if it got out that its numbers were erroneous or fudged.

But there are even more fundamental issues that still arise even if you assume that comScore and myself are both honest and without flaw.

One obvious reason to believe the graph is that the line of extrapolation looks like a pretty clean fit. Indeed, the residuals are quite small, and are comparable to the measurement errors one normally sees in large market surveys. But if we become too seduced by the goodness of the fit we risk missing a more fundamental question: what reason do we have for believing that a linear fit is appropriate?

Suppose we had a theoretical model of why people buy smartphones that predicted Android’s market share would rise linearly over time as y = ax + b, but the theory didn’t give us the coefficients a and b. The rough linearity of the observed data would confirm this theory; we would then have justification for doing a linear fit to the observed data to get a and b and using that to extrapolate into the future.

But this isn’t our situation. We have no theory of what drives smartphone marketshare, or at least not one that yields an equation. We have no justification for believing that market shares will tend to rise and fall linearly; in fact, we’ve already seen that over the same period RIM’s certainly does not.

We’re actually worse off than this, because we’ve seen growth curves in natural systems before and know that neat linearity is rare. The most likely model is that customers pick smartphones by what they see others buying, crowdsourcing the job of evaluating products to each other and leading to a growth pattern that looks like the spread of a contagious disease. Growth by contagion in bounded systems tends to be not linear but logistic.

There is a theory that could explain linear growth, however. That is this: customers would be buying Androids faster if they could, but the available supply is only growing linearly (because it takes constant dollars for each additive increment of manufacturing capacity). We actually get some support for this from the userbase growth graph, which could be well approximated by two linear segments joined by a slight change in slope.

In a slightly more elaborate version of such a theory, J. Random Consumer has set a strike price for getting an Android and is buying as soon as his strike price is crossed going downward. The supply of Androids available at any fixed price X, and thus the number of buyers, will also tend to rise proportionately to total manufacturing capacity, and thus to rise linearly.

Now we have justification for the following statement: If comScore’s numbers are accurate, and Android sales are mainly constrained by supply chain and manufacturing capacity, then a linear fit is appropriate, and it’s highly likely that Android will cross 50% share in late October.

Other theoretical models that predict linear growth of sales could be plugged in here. The point is that you really need to have such a model – and confirmation of it that is to some degree independent of a pretty graph – before a statistically-based forecast can be any better than numbers pulled out of a hat. Without such a model, applying linear regression or any other sort of curve fit imposes a shape that may look nice but will have no connection to causal reality.

Of course it could be that the linearity is an illusion. The Android userbase growth graph actually looks like it could be power-law growth. If that’s true, my linear prediction will be too conservative. Alternatively, growth might be about to nose over into the saturation part of a logistic curve. There’s simply no way to know this from the data; you have to have a model underlying your curve-fit, an independently confirmed theory about the future.
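A small numerical sketch makes the point concrete. It samples only the middle stretch of a logistic curve, fits a straight line to that window, and then extrapolates. Every parameter here (the cap, rate, midpoint, and window) is made up for illustration and is not estimated from any real market data.

```python
import math


def logistic(t, cap=100.0, rate=0.15, midpoint=20.0):
    """Logistic curve saturating at `cap`; all parameters are made up."""
    return cap / (1.0 + math.exp(-rate * (t - midpoint)))


def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, mean_y - a * mean_x


# Pretend the only history we have is a window around the curve's midpoint.
window = list(range(14, 27))
observed = [logistic(t) for t in window]

a, b = linear_fit(window, observed)
max_resid = max(abs(y - (a * t + b)) for t, y in zip(window, observed))

# Extrapolate the line and the true curve well past the observed window.
t_future = 60
line_future = a * t_future + b
true_future = logistic(t_future)

print(f"max |residual| inside the window: {max_resid:.2f} points")
print(f"line at t={t_future}: {line_future:.1f}   true curve: {true_future:.1f}")
```

Inside the window the line fits to within a fraction of a point, yet far enough out it sails past 100% while the underlying curve flattens. The fit quality alone gives no warning of this.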

Such a theory needn’t be super-elaborate to make useful predictions. For example, if we consider that fewer than 50% of cellphone users have converted to smartphones, it seems much less likely that smartphone sales are going to reach saturation in the next year. Under these conditions, the entire Android army would have to be afflicted by some Android-specific design or execution failure for sales growth to go sublinear. (Yes, this is possible; worst case, a junk-patent lawsuit leading to a temporary restraining order could be pretty bad in the U.S. market, even if it had little effect internationally.)

Let’s review our premises here:

- ESR has conducted an honest analysis which is not significantly compromised by errors.

- comScore’s numbers are accurate.

- Android sales growth is constrained primarily by supply or some unknown input with linear growth.

- The Android Army is not going to be hit by some kind of huge gotcha like a massive design failure or patent TRO.

My point right now isn’t to argue for any of these premises, just to point out how much model construction has to go on before a curve-fit means anything real. The data is what the data is, but the curve it’s fit to is laden with assumptions. Whenever someone throws a curve at you without being explicit about the assumptions and their failure points, be very, very wary of it.

But-but-but it’s so ELEGANT! How could a perfect linear-regression fit like that possibly NOT be true? [/teleology]

As we can plainly see here, Android market share will hit 120% by the end of 2014.

>As we can plainly see here, Android market share will hit 120% by the end of 2014.

The question, of course, is not whether growth will go logistic but when.

@esr :

I did a quick spline fit of the Android data set last night. It “strongly agrees” with your theory that the Android growth is linear, perhaps largely due to a “suppression” of some sort.

I did not do any further analysis other than a basic spline fit (<10 lines of code). Several intervals (e.g. 09/2009 – 01/2011) show exponential-type growth on my plot, only for it to be suppressed into linear growth in the quarters that follow (01/2011 – 05/2011). You can tell exponential from linear just by paying attention to the order of the polynomial threading through the data. What the spline shows is exponential bursts followed by linear suppressions, after which the exponential pattern resumes.

>I did a quick spline fit of the android data set last night.

I am still dubious about applying spline-fitting to this kind of data, but your suggestion that we are seeing exponential microbursts suppressed to overall linear growth seems quite plausible. The model that would go with this is one of serial breakouts into different user populations, each breakout showing power-law growth until price and supply constraints are reached. Well spotted!

Not trying to shift any goalposts here, but I think it’s interesting that Apple’s share of the profits is showing similar linear-looking growth. According to a quick eyeball guess, it looks like their share of the profits will hit 100% in Q1 2013, around the same time that Android hits 100% of the market share. How can this happen? Obviously Apple will sell one single handset for a billion dollars, while all the other companies will be selling handsets as loss leaders.

>Apple’s share of the profits is showing similar linear-looking growth

Well, that would make sense if they are also supply-constrained, wouldn’t it?

Joking aside, I would like it a lot if handset manufacturers sold their phones as loss leaders, following the videogame console model more closely (rather than selling them at full price to telcos who then sell them on as loss leaders)

Several benefits

1. This model favours making one ultra-premium model and updating it only once every 5-6 years, in order to benefit from commoditization of components. Which is nice if you’re a software developer since there is some degree of hardware standardization with or without vendor lockin.

2. It also forces the wireless operators to be more honest. If you can buy a great handset for cheap, nobody would buy a network-locked handset at any price.

3. Cuts out the shitty-phone segment of the market.

>I would like it a lot if handset manufacturers sold their phones as loss leaders

How the hell could they possibly do this? The handset makers have no equivalent of the carriers’ contract system; there’s nothing for the loss to lead to.

>If you can buy a great handset for cheap, nobody would buy a network-locked handset at any price.

That’s coming, and it’s coming fast.

I haven’t really studied this stuff, but since the ordinate is market share, rather than an absolute number, it would seem to be entirely possible for Android sales to grow exponentially, with a corresponding exponential total market growth that would lead to an exponential market share growth with a small exponent that looks linear near the start.

share = exp(at)/exp(bt) = exp(at-bt) ~ 1 + (a-b)t + …
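Spelled out a little more fully, and assuming (as the comment does) that Android's user base grows as $A e^{at}$ while the whole smartphone base grows as $M e^{bt}$, the share is itself an exponential whose leading behaviour is linear. The prefactors $A$ and $M$ are added here for generality; the comment implicitly sets both to 1.

```latex
\[
s(t) \;=\; \frac{A\,e^{at}}{M\,e^{bt}}
     \;=\; s_0\,e^{(a-b)t}
     \;=\; s_0\left[\,1 + (a-b)\,t + \tfrac{1}{2}(a-b)^2 t^2 + \cdots\right],
\qquad s_0 = \frac{A}{M}.
\]
```

The linear term dominates only while $|a-b|\,t \ll 1$, so the approximation is good near the start and degrades as the quadratic term grows.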

@Bennett:

How exactly will hardware commoditization of components (which is coming) be good for Apple? If Apple’s software (iOS) were so vastly superior to Android, one could buy your theory about the one ultra-premium model. Otherwise Apple’s gadget is nothing but a shiny glossy fashion accessory, and that is what they’re charging the premium for.

The phone makers, making it easier to root, is only going to be bad for apple. The model that Patrick Maupin posted earlier (phone makers charging you for a re-install, while at the same time making it easy to root) is a model that is not good for apple either. It’s good for us consumers, and the phone makers.

What your model fails to take into account is that iPhone sales will soon begin to overtake Android because the iPhone is NOW AVAILABLE IN TWO DIFFERENT COLORS!

How can Google hope to compete with such divinely-inspired innovation?

>How the hell could they possibly do this? The handset makers have no equivalent of the carriers’ contract system; there’s nothing for the loss to lead to.

In the videogame market, the answer is twofold: you make a profit on software from day 1, and you make a profit on hardware late in the product cycle, when the components are cheaper. Would people accept a change to a 5-year product refresh cycle in the phone market? Probably not.

>How exactly will hardware commoditization of components (which is coming) good for apple ?

Who said anything about it being good for Apple?

@Bennett:

> Who said anything about it being good for Apple?

You did. This is what you said: “This model favours making one ultra-premium model and updating it only once every 5-6 years, in order to benefit from commoditization of components.”

Commoditization happens much faster than the time intervals that you’re giving. Either you have to slow down commoditization, or your premium brand has to refresh more frequently. Commoditization creates fast changing dynamics that are diametrically opposed to the stagnant culture of one “premium brand”.

>The data is what the data is, but the curve it’s fit to is laden with assumptions.

The way in which this is wrong is that most of the social sciences (sociology, social psychology etc.) use graphs _precisely_ in order to avoid formulating explicit generative models. In my field (linguistics) it has become standard practice for subfields like psycho-linguistics to _only_ show graphs as evidence and not reveal even a single example of the original data. Attempts to discuss this practice are quickly identified and refused as a move to ‘ideologise’ the field, ‘impose an abstract theoretical explanation’ etc.

Whole academic disciplines are being built without any causal theory behind it, no matter how superficial. Sounds crazy, but it’s true.

@Ignatius T Foobar:

And remember, with iPods, they added more colors later, so it’s entirely possible they still have this trick up their sleeve.

@Bennett:

Personally, I hate the razor/razorblade strategy for technology, because it leads to greedy corporations buying stupid laws and engaging in stupid technical measures to prevent arbitrage.

Not having a causal theory actually isn’t all that bad; physics has basically been in that spot since Newton defined gravity as action-at-a-distance, and it only got worse when we realized 75 years ago that all forces are actions at a distance. What we need is a predictive theory—and it seems like the “social sciences” don’t even bother with those.

@Federico:

I think Mike E and Bennett aptly demonstrated what’s wrong with that strategy in their earlier jokes about the ridiculous conclusions you can come up with using this graph.

>You did. This is what you said: “This model favours making one ultra-premium model and updating it only once every 5-6 years, in order to benefit from commoditization of components.”

I said this would be nice for software developers, and I also implied it might be nice for consumers. I think it’s clear it’s not better for handset manufacturers. But I don’t actually care about the fortunes of large corporations, believe it or not.

We have a compellingly linear fit, yet no theory that predicts a linear fit.

Plausibly most smartphone production is in fact in China; that is, everyone who produces phones has outsourced 95% of their stuff to China.

Plausibly a lot of Chinese want to cut out the middleman, or rather go with the cheapest middleman, which is google/android, but they have contracts to honor, assembly lines to reconfigure, redesigns, market testing, and so on and so forth, so cannot turn on a dime.

This constrains our growth curve to start at zero, and end at very close to 100% after a finite and not very long time.

A logistic curve has an initial slow growth phase, and a final slow growth phase. If our growth curve reflects a choice made near simultaneously by all or almost all suppliers, the curve will not be logistic.

The initial part of the logistic curve will be cut off, because these are existing manufacturers switching. Their capacity to produce cell phones is probably following a logistic curve, but which cell phone they choose to produce will not follow a logistic curve.

It actually looks like a pretty bad fit to me. Look at the residuals: the first four are positive, then next eight are all negative, and then the last four are all positive.

Did you consider running a logistic regression?

>It actually looks like a pretty bad fit to me. Look at the residuals: the first four are positive, then next eight are all negative, and then the last four are all positive.

But all are within the normal instrumental error range for surveys of this kind, which is about 3%. If, when I update this plot, I see a maximum residual above the noise range, I’ll pursue other fit possibilities more seriously.
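The two observations above address different things: the size of each residual versus the order in which their signs appear. One conventional check on the latter is a Wald-Wolfowitz runs test. The sketch below applies it to the sign pattern as described in the comment (four positive, eight negative, four positive); it uses the normal approximation, which is rough for only sixteen points, and the function name `runs_test` is hypothetical.

```python
import math


def runs_test(signs):
    """Wald-Wolfowitz runs test on a sequence of +1/-1 values.

    Returns (observed_runs, expected_runs, z) using the normal approximation.
    """
    n_pos = sum(1 for s in signs if s > 0)
    n_neg = len(signs) - n_pos
    n = n_pos + n_neg
    # A new run starts every time the sign changes.
    runs = 1 + sum(1 for prev, cur in zip(signs, signs[1:]) if prev != cur)
    expected = 2.0 * n_pos * n_neg / n + 1.0
    variance = (2.0 * n_pos * n_neg * (2.0 * n_pos * n_neg - n)) / (n * n * (n - 1))
    z = (runs - expected) / math.sqrt(variance)
    return runs, expected, z


# Sign pattern taken from the comment: 4 positive, 8 negative, 4 positive.
signs = [+1] * 4 + [-1] * 8 + [+1] * 4
runs, expected, z = runs_test(signs)

print(f"observed runs = {runs}, expected under randomness = {expected:.1f}")
print(f"z = {z:.2f}")
```

Far fewer runs than expected hints at systematic curvature even when every individual residual sits inside the noise band, so the two checks complement rather than replace each other.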

>Did you consider running a logistic regression?

I considered fitting to a power law or logistic regression, but I’m not expert enough with the equational notation used by gnuplot’s fit feature yet – I don’t even know if ‘e’ is ‘e’.

@TimP

Indeed, what I meant is that some people use graphs to smuggle their own assumptions, as Eric points out, but many others, including many academics, use graphs to avoid making any assumption whatsoever.

The degree to which graphs have been used in some academic circles to avoid any discussion about theoretical premises, falsifiability, predictive power etc. is truly amazing …

So what are comScore hiding?

>So what are comScore hiding?

I don’t know. But at least they stand to lose heavily from sloppiness or fraud – nobody’s going to run to provide political cover for them. That’s some reason for confidence, if not as much as I would like.

An interesting related topic (old news, though):

http://www.nytimes.com/2010/04/27/world/27powerpoint.html?hp

[power point] “It’s dangerous because it can create the illusion of understanding and the illusion of control,” General McMaster said in a telephone interview afterward. “Some problems in the world are not bullet-izable.”

@pete:

> So what are comScore hiding?

Probably nothing. They make their money by selling reports, and advertise by giving a little away for free.

@Federico:

The powerpoint disease is hardly limited to the military. The number one predictor of career success in corporate environments is one’s capacity at cranking out powerpoints and excel sheets.

Those who have better things to do (ie solve real problems) are relegated to the cubicles.

“The question, of course, is not whether growth will go logistic but when.”

Interesting graph. As far as this goes, I think you already made a prediction in one of your previous articles. Apple and Android are going to keep growing at the cost of RIM’s downfall, whereas the others are going to remain largely static in their userbase. I think that after a while, Android’s userbase (in terms of numbers) is going to increase quadratically or maybe even close to exponentially until RIM’s share drops low enough. Unless of course, RIM comes up with something great.

Just my 2 cents. :)

Google apparently made a stupid security mistake with authentication tokens:

http://www.theregister.co.uk/2011/05/16/android_impersonation_attacks/

This is what might turn out to be its Achilles’ heel.

Fragmentation is a red herring. It wouldn’t matter if there were zero Android fragmentation if none of the devices were easily upgradable. I have read that google has managed to push some security fixes related to the market. It will be interesting to see if they have a general way of upgrading security issues (which might be difficult, given that each vendor does its own OS build), or if they come up with some kludge to fix this, or if (as the article suggests) it waits for a full install of a new version of the OS.

Or maybe it’s all part of their master plan to wrest control back from the carriers. Let the carriers feel so much pain they ask to be relieved of OS update duty.

>Google apparently made a stupid security mistake with authentication tokens:

On the one hand, plausible bug. On the other hand, it’s the Register, which is…er, not above exaggerating for effect. I’d wait on coverage from a more reliable source before making projections.

@Patrick Maupin:

1. Serious for those who understand security.

2. Less serious to the public at large and in terms of the PR damage it can cause.

3. Nowhere near as serious as the Apple-spying-on-you story in terms of PR damage.

So do sin(x) where x is very small and x^3 and the logistic function around 0. Just because something looks linear at a particular scale doesn’t mean it’s really linear.

>So do sin(x) where x is very small and x^3 and the logistic function around 0. Just because something looks linear at a particular scale doesn’t mean it’s really linear.

That is very true, and should be interpreted as reinforcement for one of my basic points. Being able to draw a curve through a dataset tells you nothing predictive unless you have a generative model about why that curve is that shape.
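A quick numerical check of the point, comparing sin(x) and the logistic function against their tangent lines at 0 over a narrow and a wide interval. The interval widths and the helper names `grid` and `worst_gap` are arbitrary choices made for this sketch.

```python
import math


def logistic(x):
    """Standard logistic function; its tangent at 0 is 0.5 + x/4."""
    return 1.0 / (1.0 + math.exp(-x))


def grid(half_width, steps=200):
    """Evenly spaced points covering [-half_width, +half_width]."""
    return [-half_width + 2.0 * half_width * i / steps for i in range(steps + 1)]


def worst_gap(f, tangent, xs):
    """Largest absolute difference between f and its tangent line on xs."""
    return max(abs(f(x) - tangent(x)) for x in xs)


narrow = grid(0.1)
wide = grid(3.0)

sin_narrow = worst_gap(math.sin, lambda x: x, narrow)
sin_wide = worst_gap(math.sin, lambda x: x, wide)
log_narrow = worst_gap(logistic, lambda x: 0.5 + x / 4.0, narrow)
log_wide = worst_gap(logistic, lambda x: 0.5 + x / 4.0, wide)

print(f"sin:      gap on [-0.1, 0.1] = {sin_narrow:.2e}, on [-3, 3] = {sin_wide:.2f}")
print(f"logistic: gap on [-0.1, 0.1] = {log_narrow:.2e}, on [-3, 3] = {log_wide:.2f}")
```

On the narrow interval both curves are indistinguishable from straight lines at any plausible measurement precision; on the wide one the straight line is badly wrong.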

@esr:

> On the other hand, it’s the Register, which is…er, not above exaggerating for effect.

Understood, although this is, as you say, a “plausible bug,” which got me thinking…

> I’d wait on coverage from a more reliable source before making projections.

We know that google can disable bad apps from the store, but that’s presumably because of the app store manager app. What else can they or can’t they do? We don’t know yet. I’m certainly engaging in idle speculations, but I didn’t mean to be making projections. Well, maybe one projection (see below).

@uma:

> 2. Less serious to the public at large and in terms of the PR damage it can cause.

In a vacuum, this would certainly be a true statement. But this isn’t exactly a vacuum; if there is any “there” there, expect Apple to attempt to exploit it, and whether or not there is any “there” there, expect certain parts of the Apple blogosphere to erupt violently.

A bit more info on the auth token exploit here:

http://www.uni-ulm.de/en/in/mi/staff/koenings/catching-authtokens.html

And at the bottom of that article is a link to something useful and interesting I missed from Google I/O last week:

http://www.wired.com/gadgetlab/2011/05/android-software-updates/

Google must feel they’re operating from a position of strength now. And 18 months might give them enough time to create or port a decent package manager for the OS components.

Terrific article. More people need to understand that numbers are not magic and are not brought down from on high. They can be misinterpreted, or “spun” on purpose.

I was fortunate enough to read a book in high school titled “Math Without Tears”, now long out of print. Most of the book was utterly forgettable, but there was one chapter that was all about lying with graphs.

It walked the reader through a fictional business case of an employee asked to make charts that showed that the company was doing well (for the stockholders’ meeting), that it was doing poorly (for the union meeting, using the same data), and that its ratio of product manufactured to employees was rising and thus the company was becoming more efficient (when it was obvious from inspection of the numeric data that the reverse was true).

I learned from that chapter never to pay attention to the slope of the line of any type of plot, but to look seriously at the actual numbers; to be doubly careful of graphs where one or both axes don’t start at zero; and to watch out for cases where the quantity graphed is not really the correct metric to use. And I’ve never forgotten it, even decades later.

Bennett,

> But I don’t actually care about the fortunes of large corporations, believe it or not.

You should, if they sell things that you want to buy, or if they have already sold you things for which you want support. You should want all your business partners (that is, people with whom you do business) to prosper. That is the best way for you to succeed as well. Don’t you care about your own wellbeing?

Yours,

Tom

It’s worth mentioning that the real refresh rate on cellphones (other than minor tweaks to existing designs) is still at least 2 years and probably closer to 3. Companies that seem to refresh every year either had multiple teams working in parallel pipelines or made a nearly insignificant change to an existing product – swap in a slightly different camera, tweak the shape of the case or some such.

When you decide to make a brand new cellphone design, you’re making all sorts of bets. Pick your release window and working back from that you have all these components – software and hardware and radio stuff – that all have to be ready in the up-to-date latest version you chose to standardize on at the same time, just in time for you to put it all together, do your systems testing and get all the regulatory clearances. And they never *are* all ready at the exact time you need them, so either you have to use what *is* ready on time or you have to slip the schedule. If you slip the schedule there’s a big chance your product won’t be competitive any more – it won’t be the first or the best in its category so you don’t get the free press or good press or ability to charge a luxury price that comes from those qualities. So to make your window, you usually compromise on up-to-date-ness and throw a few features overboard, possibly leaving in a few hooks that might make it easier to add them later.

So even when companies seem to be coming out with a product each year, their real OODA loop is a lot longer than that. Which would be good news for Apple if they could keep up the “Fire and Motion” dynamic that got them here. Hey, remember when all respectable smartphones had a stylus and lots of buttons? If Apple could just keep making changes like *that* every few years – requiring the whole industry to change something fundamental – then they’d be in great shape. Because the *good* versions of competitive products that match their new feature set take 2-3 years to arrive, by which time Apple could have moved the goalpost somewhere else again. (You can see this most clearly now in the tablet market, where there *are* competitors one year in, but they’re not very good yet. There’s a lot of “give ’em 6 months and they might have something!” in the reviews so far.)

Imagine Steve getting up on stage at the iPhone5 event and saying “you know what? We’ve changed our mind – we’re bringing back the stylus!” :-)

Anyway, long development cycles can make for wild swings in fortune. It’s too soon even to count out all of the currently flailing competitors. Anyone with the money to stay in the game might have more good cards yet to play.

Predictions: the next round of tablets will finally be pretty good, the next iPhone will indeed be nearly indistinguishable from the current one, Android phones will continue to feel rushed to market with already-obsolete software…and though Android will do well in the long run, it’ll always take longer than esr predicts. So I guess I’ll take the “under” on “50% share by Halloween”.

And this is precisely the issue with climate “science.” The data was hidden, FOI requests denied, outsiders mocked, there wasn’t even a market to apply pressure to the bottom line – it was endless grant-whoring by the bureaucratic machine using tax money. Furthermore, the models thus created only retro-fitted past data and that too not perfectly! Future predictions performed worse than chance! You have the gall to notice all this, you filthy peasant? GLOBAL WARMING DENIER! DENIER DENIER DENIER

I would never extrapolate on market share. Too many constraints (0%/100%, all have to add to 100%). However, you can transform the percentages into an infinite scale (bilinear transformation etc.)

If you would go to actual handsets on a log(#) vs time scale you can do interesting things. One is to determine if there is one brand that drives total market growth. The smartphone market was started by Apple, but is it now driven by Android? Supply side constraints should be visible in the absolute numbers shipped.

In an earlier comment there was the suggestion that market share was linked to the number of available handsets in price brackets under the assumption that users are brand agnostic.

Furthermore, we can assume that consumers buy after having seen a smartphone. Which would lead to a model from epidemiology (infection spread). These go logistic.
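One way to act on the transformation idea is the logit transform, which maps shares in (0, 1) onto the whole real line; a straight-line fit in logit space corresponds to a logistic curve in share space, so extrapolations can never leave the 0% to 100% range. The logit is a choice made for this sketch and not necessarily the transform the comment had in mind, and the share values are invented placeholders, not survey data.

```python
import math


def logit(p):
    """Map a share in (0, 1) onto the whole real line."""
    return math.log(p / (1.0 - p))


def inv_logit(z):
    """Inverse of logit: map any real number back into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, mean_y - a * mean_x


# Invented placeholder shares (as fractions), NOT real survey data.
months = [0, 1, 2, 3, 4, 5, 6, 7]
share = [0.10, 0.13, 0.17, 0.21, 0.26, 0.31, 0.36, 0.41]

# Fit a straight line in logit space.
a, b = linear_fit(months, [logit(p) for p in share])


def predict(t):
    """Predicted share at time t; always strictly between 0 and 1 in theory."""
    return inv_logit(a * t + b)


t50 = -b / a  # logit(0.5) == 0, so the 50% crossing is where a*t + b == 0

print(f"50% crossing at month {t50:.1f}")
print(f"predicted share at month 100: {predict(100):.4f}")
```

This swaps one modelling assumption (linear share) for another (logistic share); it fixes the out-of-range problem but still needs a causal argument for why the logistic shape should hold.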

>but many others, including many academics, use graphs to avoid making any assumption whatsoever.

Sorry, but this is wrong. All graphs smuggle in their creators’ assumptions. And without providing concrete data points, the graphs are nothing but their creators’ unsupported claims – either their assumptions or opinions (or out-and-out lies).

According to ESR, you’re out of date regarding android lifecycles.

>You should, if they sell things that you want to buy, or if they have already sold you things for which you want support. You should want all your business partners (that is, people with whom you do business) to prosper. That is the best way for you to succeed as well. Don’t you care about your own wellbeing?

Yikes. You know, I think I will probably be ok if Apple goes bankrupt, and I say this as someone with multiple Apple products, and several iOS apps on sale.

In NoWin phone News

Many Rumors. It seems that MS cannot find anyone to produce WP7 phones, so they will start making them themselves using Nokia. What comes beyond desperation? Panic? In short their famous war chest will be empty.

On the other hand I actually do not see the point. MS already pwned Nokia. Why throw more money at them? Or maybe Nokia wants to jump the WP7 ship and MS will have to buy all of it to get their WP7 phones?

First Skype, and now is Microsoft poised to buy Nokia?

http://www.techflash.com/seattle/2011/05/first-skype-now-nokia-for-microsoft.html

>>but many others, including many academics, use graphs to avoid making any assumption whatsoever.

>Sorry, but this is wrong. All graphs smuggle in their creators’ assumptions

no – but I realise it is something difficult to imagine for rational people who expect ‘scientists’ to be committed to explicit explicative theories about facts.

What I am talking about is a vast area of the soft sciences, sociology and especially psychology, whose practitioners do not know anything – literally – about falsifiability, explicative power, predictions etc, who are scared to death of the increasing competition coming from the hard neurosciences, and are therefore desperate to ‘look’ scientific, while at the same time refusing those basic premises of rational discourse that would clearly show that what they say is, basically, a word salad.

Hence the need for graphs, charts, statistical methods etc. I have seen refined statistical methods being used on detailed questionnaires gathered from large samples of informants (300 – 500), to ‘test’ the ‘hypotheses’ about what type of human being people think of when they think of whole nations as single individuals, and then argue that the results ‘prove’ that Italians, for instance, think of the US as a man, while Britons think of ‘it’ as a woman.

Believe me, none of them really believes this nonsense. The only assumption behind these ‘scientific’ graphs is ‘please allow me to remain in my nice academic position!’

For some reason, I do not think you both mean what you think each other means by ‘assumption’.

@Federico

“no – but I realise it is something difficult to imagine for rational people who expect ‘scientists’ to be committed to explicit explicative theories about facts.”

There is this funny thing about facts. You need a theory to know what facts are. You need a theory about tides to appreciate that the phases of the moon are relevant facts. In most other sciences, the phase of the moon is not a fact. Except when you are doing certain measurements that are so sensitive, that the position of the moon actually does influence your measurements.

These soft sciences have the problem that they have observations, but no facts. If anyone feels compelled to show them how to make a good theory, please feel free to help. The stuff is really baffling in complexity. To start with linguistics, how language is learned is still a mystery, as is how languages evolve. There are only incomplete theories about that, and even fewer “facts”. It does not help that everyone seems to think they “know” how it is because they learned a language once.

And a graph is part of your argumentation, just as the text. It does not “prove” anything, it only shows you some features of the data. You should never view a graph as evidence. It is an illustration to make a point. Statistics are there to point out facts about the data which can be illustrated by the graph. It is the statistics which are informative, not the graph.

(and there is a very complex relation between statistics and proof, and without a theory, you cannot have a proof)

The quotable Eric Raymond:

“Skepticism about such ‘science’ is not merely justified, it’s required – and the higher the public-policy stakes are, the more uncompromising the demand for full disclosure has to be.”

Looks like something that would fit neatly within the Heinlein quotes at the top of your blog pages. Request permission to tag it in my emails & attribute it to you.

ESR says: Granted.

>>Apple’s share of the profits is showing similar linear-looking growth

>

> Well, that would make sense if they are also supply-constrained, wouldn’t it?

It also means that Android isn’t taking any market share from iPhone (never mind iOS).

>It also means that Android isn’t taking any market share from iPhone (never mind iOS)

Doesn’t follow. It could well be the case that both product lines are supply-constrained but competing for the same customer base. So, for example, imagine that J. Random Consumer knows he’s making a long-term commitment to one app ecosystem; if not enough Androids are available at his threshold price, he might refuse to buy an iPhone that is below his threshold price anyway in order to avoid getting locked into what he judges is the wrong one. Or it might work the other way around.

Now, in fact, I don’t think Android and Apple are yet competing head-to-head nearly as much as they are competing for dumbphone conversions. So your claim is accidentally mostly true, but not a justified inference from “Android is supply constrained”.

If only the wider public had even half this level of understanding about the limitations of statistical analysis, or at the very least, reporters and their editors.

Agreed. Everyone who votes should take — and pass — at least two collegiate courses in statistical analysis and reporting. Then they’ll have no excuse for not knowing when they’re being lied to through the use of statistics.

>Agreed. Everyone who votes should take — and pass — at least two collegiate courses in statistical analysis and reporting.

I’m sure you were being tongue-in-cheek, but it is worth noting that I’ve never had a course in statistics. I learned how to be properly skeptical about statistical extrapolation on my own, simply by paying attention to obvious abuses and thinking about the problem.

“In the videogame market, the answer is twofold: you make a profit on software from day 1, and you make a profit on hardware late in the product cycle, when the components are cheaper.”

Actually, you could also build a Wii, gain massive userbase, be profitable on hardware from day one, and make a killing. Just sayin’.

> Everyone who votes should take — and pass — at least two collegiate courses in statistical analysis and reporting. Then they’ll have no excuse for not knowing when they’re being lied to through the use of statistics.

I don’t like tests which limit the franchise.

Yours,

Tom

Even if the bug is all it is cracked up to be, Google should be able to push a fix regardless of what the manufacturers and carriers do. So far as I can tell, this is a problem with GMail, Google Calendar, etc. Google can (and has) pushed upgrades to these sorts of applications (most notably GMail and Google Maps) through the Android Market. I can easily see a scenario where the fix is cleaner with an OS upgrade (sharing more of the relevant code and the like), but where it is the app choosing what transport to use for the authentication token (i.e. http vs https) it should be fixable at the app level as well.

I don’t think that article shows what he thinks it does. The chart referenced was of the time between a new android release and the release of a phone “based on it”; that would tend to decline simply from more phones coming out. With more phones being released per unit time, the chances are better that some OS update would happen to come at a convenient time for SOME phone’s development cycle. (Yes, it’ll also get easier to update as the phones approach some new standard physical design and as the OS settles down into more incremental improvements, but then it’ll increase again any time those change.) The fundamental flaw in the chart, the article, and esr’s take on it is to assume that the development cycle *starts* with the release of new android software. It doesn’t. Software is the very last thing that gets finalized. Companies start hardware development at least a year or two earlier using some earlier version of the software or some in-house approximation, then once the hardware gets to a certain stage they start to finalize the software based on what’s actually ready. If there were new Android phones coming out every single day, the delay between a new android version and a phone based on it might be *exactly* the length of the software final-integration-and-test phase, but that phase still wouldn’t be a very large fraction of the overall production process.

What this means is that when Samsung does really well, it’s because the phone designs they *already had in progress* were a pretty good match for the OS and for the needs of the customer – it was easier for them to adapt to the new environment than some of their competitors who made bets that didn’t pay off as well. Samsung in recent years has made a lot of great phones that missed their market window and never saw the light of day; it’s nice to see them finally catch a break. (The OS was clearly the worst feature of their Windows Mobile “smart” phones and Symbian “feature” phones.)

In short, you’ll make more accurate predictions if you assume the average *development* cycle is at least three times the lags in this chart. I’m sure it’s gotten faster than it was in late 2007 (the last time I was actively involved with the cellphone industry), but it’s probably not *that* much faster. OS integration time was never the chief gating factor on how fast you could make a cellphone. Heck, the time necessary to test software components has probably *increased* with the recent development of an app “ecosystem”.
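The selection effect described in this comment can be illustrated with a minimal simulation sketch. Everything in it is an assumption made for illustration, not data from the chart or the article: the 540-day development cycle, the 90-day final software integration phase, and the function names are all hypothetical. Each phone in development becomes ready for final software at a random point within the cycle after an OS release, and the observed lag is set by whichever phone is ready first.

```python
import random


def first_phone_lag(n_phones, rng, cycle_days=540, integration_days=90):
    """Days from an OS release until the first phone based on it ships.

    cycle_days and integration_days are hypothetical illustration values.
    """
    # Each phone reaches its "ready for final software" point at a random
    # moment within the development cycle following the OS release.
    waits = [rng.uniform(0, cycle_days) for _ in range(n_phones)]
    # The first phone to ship is the one that was ready soonest, plus the
    # fixed integration-and-test phase.
    return integration_days + min(waits)


def mean_lag(n_phones, trials=2000, seed=0):
    """Average first-phone lag over many simulated OS releases."""
    rng = random.Random(seed)
    total = sum(first_phone_lag(n_phones, rng) for _ in range(trials))
    return total / trials


if __name__ == "__main__":
    for n in (1, 5, 25, 125):
        print(n, "phones in development ->", round(mean_lag(n)), "days")
```

In this toy model no individual development cycle gets any shorter, yet the average lag falls toward the integration time as the number of phones in development grows, which is the pattern the comment argues the chart cannot distinguish from a genuinely faster cycle.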

>I don’t think that article shows what he thinks it does.

You misunderstood my point, probably because you slightly misunderstood the article I was referencing. No blame for that, however; looking back at it, it was a reasonable mistake to make, because the labeling on the chart was rather confusing. What I thought the article showed was not particularly that the time from an Android software release to phones implementing it was decreasing, but that the product lifetimes of Android-capable handsets are decreasing (more or less independently of the software release cycle).

We can’t expect voters to think! Why, they might stop voting for the same ~~morons~~ politicians over and over! :)

Even if I were being serious, you have enough understanding of math to understand the basic statistical analysis one needs to function properly in society. It’s just too bad that you happen to be in the minority.

My annual pitch for Edward Tufte’s “The Visual Display of Quantitative Information” goes here.

For what I do (design boardgames), this is a book that merits an annual re-read, much like Strunk & White.

The three subsequent volumes he sells are, in my experience, rehashes of the information in VDQI, but with more examples. I have them because I took the class he runs – it’s worth the money if there’s a session near you.

Reading the article more closely I have to question some other parts. Consider this bit:

Um, yeah. Not so much, really. If you’re any of those four in, say, 2006, for most “smartphones” or “feature phones” you do *not* design your own OS. You license the OS from Microsoft or Symbian. And probably license the chipset from TI.

The OS work is pretty much the same for Android as it would have been for Symbian or WinMobile. Using Android gets you a *better* OS and a cheaper one – saving you $10/phone (Winmobile) or $5/phone (Symbian) in licensing fees – but it doesn’t save you the time or dollar cost of developing your *own* OS because you were never doing that in the first place. The people who *were* developing their own OS – Nokia and Microsoft – are the ones who might potentially realize that level of savings by switching, but they haven’t done so. In short, Android has reduced cost, but not time-to-market.

The system-on-a-chip part is true – TI and others kept integrating more stuff into the standard board to the point that you could just order one chip and pick from a menu what features you want it to have – with a lot of stuff that had previously been done in software or in external chips moving into ROM. But that trend started a long time ago and didn’t really have much to do with Android per se. A general standardization of the industry around fewer OS versions might help simplify the architecture, but this was happening before Android came along – it’s just that pre-android, the system-on-a-chip you’d buy (say, a TI OMAP chip) would be expected to use Symbian (ugh).

>In short, Android has reduced cost, but not time-to-market.

I think you’re mistaken in this. Increasingly, handsets and tablets are designed by minor tweaks to a reference-board design originated by a chip vendor (such as Qualcomm) which rightly calculates that it can get a lot more design wins by minimizing customer NRE. Thus, more and more of the porting work is being pushed back to the people designing those reference boards. This really does pull down the handset maker’s NRE and time to market.

> Increasingly, handsets and tablets are designed by minor tweaks to a reference-board design originated by a chip vendor (such as Qualcomm) which rightly calculates that it can get a lot more design wins by minimizing customer NRE.

This is definitely the case. This change in the industry – in the dumbphone segment – happened > 4 yrs ago. It is all about turn-key solutions these days from the likes of Qualcomm, Infineon, TI etc. Many chip makers have essentially become vendors for Qualcomm so that they can bundle up their solutions (e.g. in power management, connectivity etc) in the Qualcomm turn-key solution.

@ESR

If you ever want to do real statistics, you should switch to R.

Right. I was working at Beatnik (a supplier of software components to cellphone manufacturers) through that transition. During the years when I was testing it, our music engine moved from a block of code you get from Beatnik to install on a disk image to a block of code printed into the same chip with your OS and all the encoding/decoding circuitry – you pay the chip vendor an extra few cents per phone if you’re gonna use it and he passes the money along, just like he would pass Symbian their $2.50.

I agree that phones (smart, dumb and “feature”) and tablets have moved towards standardized highly concentrated chip designs where a lot of the integration is done on the chip/board level and not so much by the phone maker. I agree that this change has sped up the development cycle. I disagree that Android had much to do with that transition – it was happening with or without it. Android has benefited from that transition since that made it possible for the universe of phones out there to churn a little faster – but has not significantly *contributed* to it. It just happened to come along not long after the transition started.

In fact, if tomorrow Microsoft came out with a version of Phone 7 that was somehow *really good*, good enough to be *worth* their ridiculous licensing fees, this exact same trend would make it possible for everybody to switch *back* away from Android about as fast as they switched to it. HTC would only have to check the “Windows” box instead of the “Android” box when they order their parts, and they could have a family of windows devices at relatively low cost in a small amount of time.

>I disagree that Android had much to do with that transition – it was happening with or without it. Android has benefited from that transition since that made it possible for the universe of phones out there to churn a little faster – but has not significantly *contributed* to it.

Qualcomm and Nvidia are set to ship SoCs for smartphones and tablets precisely because they have confidence that there will be a large, multi-vendor and multi-customer market for them. That means the existence of Android – specifically its open-source nature – is now financially driving the hardware-integration side.

Now, if you want to argue that Android itself was not specifically necessary to get us to this stage of ephemeralization, I won’t disagree. The necessary condition was an open-source platform – otherwise the economics wouldn’t have worked. It could have been WebOS or MeeGo, had they achieved early critical mass. But in fact it was Android, so arguing that Android isn’t contributing is specious.

This discussion reminds me of a discussion I had recently with a guy who is a professor of environmental science at a local, prestigious university. Our discussion wandered from global warming to a more general one on statistics and socialism. He lectured me that statistics needed an expert to handle them correctly (which I agree with BTW). He told me that he had taught several courses on statistics.

Then he went on to tell me how bad the USA was because all those socialist European countries always score higher on league tables on happiness. I said that those tables were obviously biased to the particular definition of “happiness” chosen. He lectured me on how carefully designed these studies are, and how experts in statistics designed them.

“These aren’t opinions Jessica,” he told me, “numbers don’t lie.”

It would be funny if it were not for the fact that this “professor” is filling a generation’s head with mush.

Jessica: If numbers don’t lie, here’s a gedanken-experiment.

If those countries are happier in an objective way, how much immigration do they get, relative to the United States? People will migrate from places where they are unhappy to places where they can be happier.

If they close their borders, or put means testing on immigrants, then that happiness is being withheld from people who are denied an immigration visa because they don’t meet the arbitrary standards of white male hegemons, or can’t pay enough filthy lucre to get a visa.

Well before Android existed, the standard licensing agreements were made to work so that you can legally make a chip with a *bunch* of OSes (and other components) on it but people only have to pay for what they actually use. So there’s no extra cost to having an extra proprietary OS or two around – you only pay the licensing fee if you need that particular product, and the chip has oodles of extra space so there’s no harm in having it there. You do need a lot of vendors to ship stuff using your chip but you don’t need a lot of vendors using *the same* OS and it doesn’t need to be open source.

The Texas Instruments OMAP series typically shipped with both Linux and Symbian. Some people rolled their own solution but most went with Symbian.

The first phone that shipped using the Qualcomm Snapdragon was running Windows Mobile, not Android. There was nothing about the development of that chip that required it to be used to run Android or prevented it from being used to run other OSes. Qualcomm and Nvidia would have been just as happy selling that chip into a “large, multi-vendor and multi-customer market” of Windows Mobile phones, if that had been the way the market went.

Why do you think “The necessary condition was an open-source platform”? As near as I can tell, the people who wrote those breathless articles about Snapdragon were simply ignorant of what had come before. They might not have realized that delegating part of the architecture to a vendor who puts everything on one chip so you can focus on other stuff is just what one *does* now. It’s what Apple did too. You can bring the economies of scale down to smaller operators (smaller than Apple, I mean) by having them build on a common platform, but I don’t get why that platform has to be open source. Why wouldn’t the economics have worked? Near as I can tell they *did* work…for Symbian. Symbian system-on-a-chip phones are my existence proof that an open-source platform was *not* a necessary condition.

>The first phone that shipped using the Qualcomm Snapdragon was running Windows Mobile, not Android.

Well that’s OK, because I wasn’t talking about Snapdragon.

>Symbian system-on-a-chip phones are my existence proof that an open-source platform was *not* a necessary condition.

*Guffaw* Oh, man, did you choose the worst possible example for your thesis.

Symbian was way smarter than you. They open-sourced their OS because – and I speak from certain knowledge here, having heard it directly from the Nokia exec who drove the decision – they ran the numbers and concluded that no one company could afford the amount of engineering talent required to do all the hardware ports they knew they needed.

One of the principal drivers for open sourcing here in the 21st century is that hiring the people to do software development at scale is really, really expensive. Even a decade ago Symbian understood that it was no longer possible to achieve their objectives without more cost-spreading and risk-buffering than is possible inside a closed-source model. The requirements for a competitive smartphone OS today make it larger and more complex than Symbian was, making closed-source development even more of a non-starter (see recent horribly botched updates by both Microsoft and RIM for empirical confirmation).

Yeah, I know, Apple. They get away with closed source for the iPhone because (a) they co-opted an awful lot of open-source work through Darwin, and (b) they strictly control the number of hardware configurations they have to deal with. The trouble with being Apple is that your portfolio of hardware options absolutely has to stay limited, because otherwise you end up in the same bind Symbian discovered. Thus you end up vulnerable to a swarm attack by a platform that can support more different product bets and price/capability tradeoffs than you can exactly because it can cost- and risk-spread the OS development costs in a way you cannot.

@Glen Raphael:

Obviously, there are tradeoffs involved in productizing silicon, and at some process nodes, with a couple of small operating systems and different markets, it makes sense to make a single piece of silicon with multiple ROM images. (And it may always make sense to include ROM for a separate dedicated baseband processor.)

But at some point, with higher volumes in a single OS, it probably makes sense to reduce the ROM size. And then once the OS doesn’t fit in ROM anyway and is subject to change (so it can’t be in mask ROM), and the logic process doesn’t support flash very well (so it’s more cost effective to put reprogrammable NVM in an external chip), and the OS requires 256MB of RAM just to run applications (so you’re nowhere near a single chip solution any more), it might make sense to eschew ROM almost completely (except for a bootloader) for the UI processor, and just ensure there is enough cache and local RAM to run the OS nicely.

I don’t know for a fact that modern day Android chips don’t have any OS in ROM, but given that most of them can apparently be rooted, I strongly suspect that any ROM addressable by the UI processor over and above that needed to boot the thing is a prime target for cost reduction removal.

Ken Burnside says:

> If those countries are happier in an objective way, how much immigration do they get, relative to the United States?

Curiously Ken, that is almost exactly the argument that I responded with.

Many see Apple’s limited hardware configurations as a plus, not a minus. Despite the regular jibes directed at Apple for just being about marketing, what Apple does contradicts the standard marketing department wisdom. It’s the traditional firms that come out with a slew of barely-differentiated models, which can confuse users and lower profit margins through added expenses and less economy of scale. The swarm of Android phones is both a strength and a weakness.

Has anyone posted this link? iPhone share of phone market in Q1: 5% volumes, 20% revenues, 55% profit

>Many see Apple’s limited hardware configurations as a plus, not a minus.

Sure. The advantages are obvious, beginning with limiting their tech support and software maintenance costs. What we’re seeing is an extended experiment in whether that advantage, minus the inability to place multiple product bets at different price-vs.-capability tradeoffs, nets out to a positive.

@Jessica, Ken:

Not that I actually have a strong opinion one way or the other on the happiness / immigration question, but if you were going to measure happiness via immigration differentials, don’t you have to control for the differences in immigration policies? I suspect that comparison would be tricky because, among other things, most (all?) Western European countries have significantly more restrictive immigration policies than the US does, even now. To take an extreme example of this, Singapore’s population is over 40% foreign born because of very liberal immigration policies. I don’t think that means Singapore qualifies as one of the happiest countries on earth.

Not curious; rather, I think obvious to anyone trained to look for objective confirmations of claims. (I said basically the same thing, but I think the filter ate it.)

I think we’re talking past each other because we’re talking about different time periods. If you mean *real* open source, Symbian did at one point quite recently try to “open-source their OS”, basically as a too-late Hail Mary pass after they’d gotten hopelessly behind the state of the market. The system-on-a-chip Symbian phones I’m talking about were released in 2007, well before the Symbian Foundation was launched (2009) or Symbian was officially available as open source (February 2010)…and by November, once it had been open sourced, it was obvious it wasn’t going to work, and Nokia gave up hope on the open-source model. Incidentally, just last month, Nokia officially took all development back in-house, reverting to the earlier group-collaboration model where they share limited amounts of code with licensees and charge money for the product. Quote: “By April 5, 2011, Nokia ceased to open source any portion of the Symbian software and reduced its collaboration to a small group of pre-selected partners in Japan”. And yeah, the old code’s still available in repositories, but realistically nobody ever wanted it so nothing will come of that.

(If you’re thinking about an earlier period, Symbian itself was a private consortium that was, yes, designed to spread risk exposure among the major contributors. But it wasn’t at the time in any sense “open source”.)

The problem with Symbian wasn’t that it was mostly-closed source so much as that it was *bad* mostly-closed source. Symbian contracted a near-fatal case of what I’ll call the Microsoft Disease. You had early success with some platform, so naturally you try to *maintain compatibility* as you add more bells and whistles and barnacles until the original structure can barely be seen. Eventually the only decent thing to do is take the code out back, shoot it in the head, and start over with a simpler base. Forget compatibility. But once you’ve got an “ecosystem” going it’s hard to do that. Symbian got locked into some bad initial design decisions and became an ugly unmaintainable mess that only a mother could love. (they actually did try to rewrite/re-architect a few times, but never from a clean slate and it never “took”.)

Still, Symbian remained tolerably successful and did produce vast swarms of cheap phones with minimal vendor input. And probably could have continued doing so for who knows how long if the Apple/Android juggernaut hadn’t wrenched control back from the carriers and shown the world that phone software could actually be good.

I think it does.

Certainly, that 40% thinks they’re happier in Singapore than wherever they came from. I don’t think I personally could be happy in a country with the sort of draconian criminal laws they have, but clearly a metric assload of people have different priorities.

About Happiness

Happiness research is indeed all about the subjective feeling: You simply ask people how happy they feel. Curiously, the results are very consistent and reproducible. As even the researchers did not believe them, they correlated these results with every “objective” measure of happiness they could think of. And it showed that simply asking was the best way. Before you start measuring, you can write a book about all the ways an empirical study can fail. However, you only really know after you actually do the study. The happiness question proved to be very good.

There is the problem of what people mean when they say they feel happy. But as the interviewers are human themselves, they might have a shared vocabulary.

Now with respect to immigration, you all make some hidden assumptions and an obvious error (which is only obvious with hindsight).

– Do prospective immigrants know where people are most happy? No, they do not.

– Is this happiness shared with immigrants? Will a third world immigrant become as happy as the natives? Not likely

– Can prospective immigrants actually go to the happiest place (eg, Iceland)? Not really

But the biggest error people make is:

– Do people actually know what will make them happy? A definite NO

– Will people do now what they know will make them happy later? Again, a definite NO

Good examples are addictions and unhealthy lifestyles. People actually know they will become very, very unhappy if they drink or eat too much or if they gamble, or go into debt. They know that their life will become very miserable if they go after this woman/man. But they still do.

On the other hand, what makes people happy is getting into a stable relationship, having children, and leading a social life with lots of friends. But instead, they get a career. They work too much, do not see their children, spouse, relatives, and friends, but only their office mates. They get into an affair that will break up their family, drink too much, eat too much etc. In total, they lead an unhappy life out of free will.

So it is with immigrants. What they are after is money, because they are convinced that will be best for them (and their families). So they went to Libya, or Saudi Arabia, even though they know they will never be happy there.

Obviously, the argument “the numbers do not lie” can only be interpreted right if you have looked into the underlying methods. That is what a statistician should do. It is the most important and difficult part of being a statistician: You give me these numbers, but what do they actually mean?

“The first-quarter revenue was an all-time record for Intel fueled by double-digit annual revenue growth in every major product segment and across all geographies,” Intel President and CEO Paul Otellini said in a statement.

http://news.cnet.com/8301-13924_3-20055353-64.html

The PC market, by contrast, declined last quarter. Global shipments fell 3.2 percent, hurt in part because some consumers bought tablets instead, research firm IDC reported last month.

http://www.bloomberg.com/news/2011-05-18/apple-ipad-s-buzz-saw-success-cuts-into-pc-sales-at-hp-dell.html

Where are all those excess Intel processors going?

“Do prospective immigrants know where people are most happy? No, they do not.”

They may not know where people are most happy, but it is unlikely that they would move to a country their earlier arriving friends or relatives did not praise.

“Then he went on to tell me how bad the USA was because all those socialist European countries always score higher on league tables on happiness. I said that those tables were obviously biased to the particular definition of “happiness” chosen. ”

Also, stipulating those countries are happier, why would one conclude that “socialism” is the main reason? Perhaps it’s their relative ethnic or cultural homogeneity?

@JB

“They may not know where people are most happy, but it is unlikely that they would move to a country their earlier arriving friends or relatives did not praise.”

You mean, like Libya and Saudi Arabia? Immigrants are mostly attracted by income, not happiness.

And you don’t think that people attracted by income believe that income will help them be happier?

@The Monster

“And you don’t think that people attracted by income believe that income will help them be happier?”

Sometimes. But are they right? Does money make you happy?

No, it helps me to be happier. Money is a tool. I can use it to purchase things that make my life more pleasant than it was without them. Like any tool, if used incorrectly, it won’t do the job.

Furthermore, engaging in productive work that earns me money directly helps me be happier, separate and apart from the value of the money itself. I believe that part is actually hard-wired into us. According to Cesar Millan, something similar is wired into dogs as well.

@The Monster

“engaging in productive work that earns me money directly helps me be happier, separate and apart from the value of the money itself.”

So, my point was: (1) people select migration targets for money and work; (2) people generally do not know what will make them happy; (3) a country with happy people will not necessarily induce happiness in an immigrant. Therefore, migration patterns are not a good measure of happiness.

Not an experiment, a proven business strategy. Since doing the same to their Macintosh line (there are only ever two or three models each of desktop and laptop Mac) Apple has become the largest single PC vendor.

Strategically limiting choice is integral to the Apple Way, and has been for a long time.

@Jeff Read

“Not an experiment, a proven business strategy.”

And we know the outcome: Large profits, low market share.

The “multiple product bets at different price-vs.-capability tradeoffs” will dominate the market because of the low margins (=price) and high diversity. Not everyone can and wants to drive a Mercedes.

Point 1 is oversimplified. People select migration targets for a variety of reasons, of which income potential is certainly one, but not everything. As I said before, I don’t believe the chance to earn a lot of money in Singapore would be enough to make me put up with the restrictions on my liberty. (But I’m willing to entertain the possibility that someone would offer me such an absurdly large amount of money I’d be willing to put up with those restrictions for a limited time, such as a million dollars for a one-month job.)

Point 2 moves the goalposts. Of course people lack perfect a priori knowledge of whether certain things will make them happy. But others are even less likely to know what will make me happy than I am. And over time, our decision loop feeds back data from how well our prior choices did at maximizing happiness. Even when we make choices that turn out to produce less happiness in the short term, if we can learn from those mistakes, we’ll make better choices in the future. But imperfect knowledge is not NO knowledge.

Point 3. “Not necessarily” is just another way to say that since it isn’t 100% always the case, there exists no correlation at all.

Fail.

@The Monster

In short, do you think migration streams correlate well with happiness in the receiving countries?

I think there is very little correlation. Too little to be of use to rank countries on happiness.

Yes, I think there’s good correlation.

I think there was especially good correlation when we divided Germany and the Soviet bloc had to build a wall manned with guards under orders to shoot people trying to flee the Glorious Workers’ Paradise. You could argue that the Osties wanted better-paying jobs, and I’d agree that might have been part of the equation, but it’s just part of them being very unhappy and wanting to be happier.

@The Monster

“You could argue that the Osties wanted better-paying jobs, and I’d agree that might have been part of the equation, but it’s just part of them being very unhappy and wanting to be happier.”

But can you conclude that Germans were happier than Swedes, Swiss, Norwegians, Brits, and French because these Ossies migrated to Germany instead of to those countries? And does Mexicans migrating to the USA instead of Iceland mean that the people in the USA are happier than those in Iceland or Canada?

Patrick: Aren’t those extra Intel processors going into Macs? Their laptops and desktops are doing well.

Winter: Except that Apple’s share of both PCs and phones has been increasing for years. True, there may be an upper limit to the market share you can reach with that strategy, but they don’t seem to have reached it yet.

@PapaySF:

> Aren’t those extra Intel processors going into Macs? Their laptops and desktops are doing well.

Extremely well, but not enough to account for that discrepancy. They shipped 3.76 million, up 28% YOY out of about 100 million PCs, and Intel and AMD both report growing sales. I think this is just another example of IDC not knowing one hole from another, but would be interested in alternate explanations.

You have to look at such things in context. First of all, there’s the question of the range of choices available to someone. Because it was policy in BRD that any Ostie could claim citizenship in the West by setting foot there, it made it easier for them to move there than any other country, even Austria or Switzerland where there would be no language problem. To move to the countries you list, they’d have to get a visa.

But I think it’s very clear from the lack of “Westies” wanting to move to DDR, and the need to threaten Osties with death if they tried to emigrate, that a lot of people felt they were happier in BRD than DDR.

Applying it to the Singapore situation, we’d have to factor in what other countries were available as emigration targets for each person, but having such a large immigrant population is pretty convincing evidence that at least they’re happier there than they were wherever each one came from (or they’d go back).

I’m not going to touch happiness and migration, but money and happiness are highly correlated if you are poor.

There have been a ton of studies on the money vs happiness subject, easily several each decade (it’s a really popular research subject), and they all conclude that money equals happiness for the poor, but that money is irrelevant to happiness for the rich.

The crossover point is somewhere in the middle class income range, for the given locality.

Migration is certainly from a place where a person feels unhappy to a place where they hope things will be better.

If the above isn’t true, why on earth would they have moved? They may have been unhappy in the old place because someone was threatening them with a sharp object, or because they saw an old girlfriend too often for comfort.

The new place may hold out the hope that there will be fewer sharp objects brandished at them or the hope that the old girlfriend will be absent. It’s still gonna be [old, bad somehow], [new, hoped to be better than old]. Bad and better are going to be quite subjective in many cases.

Money, laws, friends, social environment are all factors in the above.

In MP3 players: large profits, large market share. “The marketplace” is not the same entity for every class of product.

Again, Wilkinson and Pickett. Income equality is the single biggest predictor of a society’s harmony and of the physical and emotional health of its citizens. I don’t know how well it correlates with something as subjective as “happiness”, but social organizations that favor income equality seem to give everyone a better life.

Inspired by you. Full text below.

CATHEDRAL AND BIZARRE, Waterloo, Monday (NTN) — Research in Motion have broken new barriers with the PlayBook tablet, a BlackBerry that can’t read email. And needs to be tethered to a phone.

“We feel a technology preview is just the thing we need to fight iPhone and Android in the consumer market,” said founder and co-CEO Mike Lazaridis. “The missing core functionality should be seen as areas of spectacular potential. Also, the board has ascertained that you should stay away from the brown acid, it’s not so good.”

The PlayBook has launched remarkably, with thousands of the devices being recalled for crippling operating system bugs straight after release.

In a double-tap Osborne through the head, the PlayBook uses the new QNX BlackBerry OS, which does not run current BlackBerry apps, will not be available on phones for another year and will not work on any current BlackBerry device. This is separate from OS 7, to be released soon, which will also not work on any existing BlackBerry. RIM’s present mobile carrier partners were “overwhelmed” to be stuck with so much already-obsolete stock.

RIM led the world into the smartphone era, several years before Apple’s iPhone turned everyone into the sort of twat you only ever used to see carrying a BlackBerry.

Technology industry rumours suggest a Microsoft takeover of RIM, considered an excellent match in competence and vision. “Synergy’s just another word for two and two makes one!” said Steve Ballmer. “We will assimilate your technological stench of death into our own.”

>“Synergy’s just another word for two and two makes one!” said Steve Ballmer. “We will assimilate your technological stench of death into our own.”

LOL. Most convincing fake Steve Ballmer quote EVAR.

Apparently Google figured out how to fix their Android security issue on the server side:

http://blogs.computerworld.com/18308/google_android_security_flaw?source=rss_blogs

Winter @May 18th, 2011 at 11:31 am:

Migration patterns reflect relative attractiveness of societies when other factors are equal. People go where they think they will be happier.

If there is a natural disaster in progress in country A, people will move somewhere else, regardless of “happiness”. People are going where they think they will be – rather than not be.

If entry to a country is effectively prohibited or restricted, migrations will flow elsewhere.

If entry to two countries is prohibited, but migrants make strenuous efforts to overcome the barriers of one country but not another, it’s because they expect to be happier in the first country.

The difficulties of movement also affect flow. Mexicans can migrate to the U.S. by walking. They can’t get to Iceland except by a much greater and more difficult effort.

However, there are other groups for which the U.S. and several other countries are comparably difficult to reach and enter, and which have comparable internal conditions – and to which they migrate in comparable numbers. Somali emigrants (many considered refugees) go to the U.S. – and also to Finland.

@Rich Rostrom

“Migration patterns reflect relative attractiveness of societies when other factors are equal. People go where they think they will be happier.”

No Nigerian, Philippine, or Indonesian goes to work as a bond slave in Libya or Saudi Arabia expecting to be happy there. They do it expecting to earn money to feed their family. And to get out the moment they have earned enough money to survive at home.

The original point was that migration patterns were correlated to the happiness of the natives of the receiving countries, and that a country with more happy natives would receive more immigrants. So you could determine how happy the natives are from how many immigrants go there.

My sole point was that you cannot do that.

So if there were better-paying jobs in Nigeria, the Philippines, or Indonesia, those people could stay at home and be happier. But those countries don’t have the jobs.

Those people take those jobs expecting to be happier than if their kids starve. Seems like a reasonable expectation. I think about the hardest thing on a parent is to have to bury a child. Definitely not a happy-making thing at all.

More on the undead phone OS. WP7 is set at 1.6% market share.

Microsoft fails to turn punters on to WinPho 7

Only 1.6m smartphones based on MS’ new OS sold in Q1

http://www.reghardware.com/2011/05/19/microsoft_windows_phone_7_q1_2011/

@Winter:

As I mentioned here, Canalys says that Windows only shipped in 2.5 M phones for the quarter. Interesting discrepancy. I guess Canalys doesn’t get as much Microsoft money as Gartner.

Any comment on this? If accurate, it looks like Amazon might be trying to see if they can be a bigger asshole with their Android app-store than Apple is.

@William B Swift:

That’s the least of it. That guy happened to notice a molehill, but if he was paying attention, he might have seen the mountain. Here’s a starting point:

http://igdaboard.wordpress.com/2011/04/14/important-advisory-about-amazon%E2%80%99s-appstore-distribution-terms-2/

Buy a Windows phone, get a free Xbox!

http://www.eweek.com/c/a/Mobile-and-Wireless/Verizon-HTC-Trophy-Windows-Phone-Could-Alter-Microsofts-Prospects-421638/

Misread the article. Thought it said “game console” but it said “console game.”

So they’re not that desperate. Yet.

AYBABTU:

http://www.google.com/support/androidmarket/bin/answer.py?hl=en&answer=1306490&topic=1100171

I see “Failed to fetch license for [movie title] (error 49)” when I try to download a movie

You’ll receive this “Error 49” message if you attempt to play a movie on a rooted device. Rooted devices are currently unsupported due to requirements related to copyright protection.

Sure that you’ve never rooted your device? Submit a bug report

@not:

Thus showing that google itself (not just netflix) has to pay lip service to the idea of making water not wet, now that they have debuted their movie service.

Or, perhaps more to the point, even if (which is one of my hypotheses) google doesn’t really care to be in the movie business, and really just wanted to get netflix moving, they had to negotiate with the studios in order to get that far, and in that process, they had to come to understand the depths of paranoia that netflix has to deal with.

In any case, they have to pretend like they care about DRM.

Because the Netflix app will work on your rooted phone, unlike Google’s movie app?

stunning conclusion coming up in 3…2…1…

I have nothing more to offer on this topic that I have not already said, nor can I do better than the bitter words coming from what once were *Android’s biggest fans*.

http://www.androidcentral.com/google-movies-blocked-rooted-devices

If this were Apple and the iPhone, the equivalent would be doing something to piss off the checkbook liberals who drive the Audi to work and take the Subaru when they have to shuttle the children to karate practice.

Android is not open. Has never been open. Yes, never. And Google has always sold out the hard core geeks and the rabid web-is-open, information-wants-to-be-free, code-is-poetry acolytes for just one more person to access just one more service and offer up just one more sliver of data to be presented with just one more advertisement on which to click.

And now that Google is no longer only ‘aggregating’ others’ content but actually buying, selling and distributing it, you, dear geek, mean even less to them than you once did.

Which was next to nothing.

Apple buys patents from Freescale

Just recently saw this, thought it was interesting.

Fixed that for you. It’s amazing the number of people who see those two things as the same thing.

It may, however, dampen the value of CyanogenMod. A question for those who’ve played with it (I haven’t, haven’t had time): does Cyanogen require a rooted phone?

>Does Cyanogen require a rooted phone?

Yes, it does.

‘exp(1)’ is ‘e’ (in gnuplot).

Something like this?

>Something like this?

“Undefined value during function evaluation”

I think there needs to be an offset constant term as well.
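The function being fitted isn’t shown in this thread, so the following is only a sketch of what “an exponential with an offset constant term” could look like, assuming a model of the form y = a·exp(b·x) + c. It is written in plain Python rather than gnuplot, and the function name, the grid of candidate rates, and the sample numbers are all made up for illustration; none of it is comScore data. The idea is that for any fixed b the model is linear in a and c, so a and c can be solved in closed form and only b needs to be scanned.

```python
import math

def fit_offset_exponential(xs, ys, b_candidates):
    """Fit y = a*exp(b*x) + c by scanning b and solving a, c by least squares.

    Hypothetical helper for illustration; returns (sse, a, b, c) for the
    candidate b with the smallest sum of squared errors, or None if no
    candidate was usable.
    """
    n = len(xs)
    mean_y = sum(ys) / n
    best = None
    for b in b_candidates:
        # With b fixed, u = exp(b*x) turns the model into y = a*u + c,
        # which is ordinary simple linear regression of y on u.
        us = [math.exp(b * x) for x in xs]
        mean_u = sum(us) / n
        var_u = sum((u - mean_u) ** 2 for u in us)
        if var_u == 0.0:
            continue  # degenerate candidate (e.g. b == 0): slope undefined
        a = sum((u - mean_u) * (y - mean_y) for u, y in zip(us, ys)) / var_u
        c = mean_y - a * mean_u
        sse = sum((a * u + c - y) ** 2 for u, y in zip(us, ys))
        if best is None or sse < best[0]:
            best = (sse, a, b, c)
    return best

if __name__ == "__main__":
    # Synthetic, noise-free sample generated from a known curve.
    xs = list(range(10))
    ys = [2.0 * math.exp(0.3 * x) + 5.0 for x in xs]
    grid = [k / 100 for k in range(1, 101)]  # candidate b values 0.01 .. 1.00
    sse, a, b, c = fit_offset_exponential(xs, ys, grid)
    print(f"a={a:.3f} b={b:.3f} c={c:.3f} sse={sse:.3g}")
```

Scanning b on a grid is cruder than an iterative nonlinear fit, but it needs no starting guesses, which is one common source of trouble when a nonlinear fit refuses to converge.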

As I suspected. So yes, it dampens the value of Cyanogen.

Off topic

Update on the absence of (L)GPL violations in Android. Shock and horror: No violations keep turning up in Android.

Clarification on Android, its (Lack of) Copyleft-ness, and GPL Enforcement

http://ebb.org/bkuhn/blog/2011/05/19/proffitt.html

> Well, that would make sense if they are also supply-constrained, wouldn’t it?

Apple’s semi-consistent 25% share is easier to understand if you look at this:

http://www.asymco.com/2011/05/22/other-vendors-sell-10-of-smartphones-but-30-of-voice-oriented-phones/

Android’s rise is at the expense of Nokia/Windows Mobile, not iOS.

The other point he makes, namely that in 2008, the combined fragmented footprint of Symbian was well over 60% of the market, and that this didn’t ensure the platform’s success, is quite valid.

Android is huge, and getting larger. (And Google will do what it thinks necessary to continue to grow while waving bu-bye to its early adopters.)

iOS is also huge, and getting larger.